I built myself an editorial pipeline

I built three AI agents to help me write a streaming newsletter. They disappoint me every day. I keep them anyway. That’s the whole article, but it deserves more than three sentences, so here we go.

I write about streaming for a living. Mostly solo. The Substack newsletter (French analysis plus this English builder log), a narrative newsletter on LinkedIn, LinkedIn long-form posts, reports on Lens, and an auto-updating site at verticaldrama.tv for one of my verticals. To do that work seriously I need to read a lot, and I need to spot patterns before they’re obvious. That doesn’t fit in 24 hours when you’re one person.

Last year, early on, I made it worse. I published a LinkedIn post claiming someone in the creator economy had just signed with an agency. I had asked Gemini, taken the answer at face value, and hit publish. The deal didn’t exist. Big fail, and it was on me. I was stupid.

The lesson wasn’t really about Gemini. I was treating an LLM like a wire service. An LLM giving you a confident answer is not the same thing as a fact existing in the world. So I started double-checking everything (which slows you down, which is fine), and then I hit a different wall.

Volume.

I’m one person. I can only read so much. And what I’m actually after isn’t the news, it’s weak signals. Two reputable outlets mentioning a topic that nobody else is covering. A pattern that shows up twice in two weeks before anybody names it. The catch is that you can’t tell what’s noise until you’ve read it. So either you read everything, which you can’t, or you skip stuff, which means you miss the signal that mattered. Couldn’t afford to hire someone either.

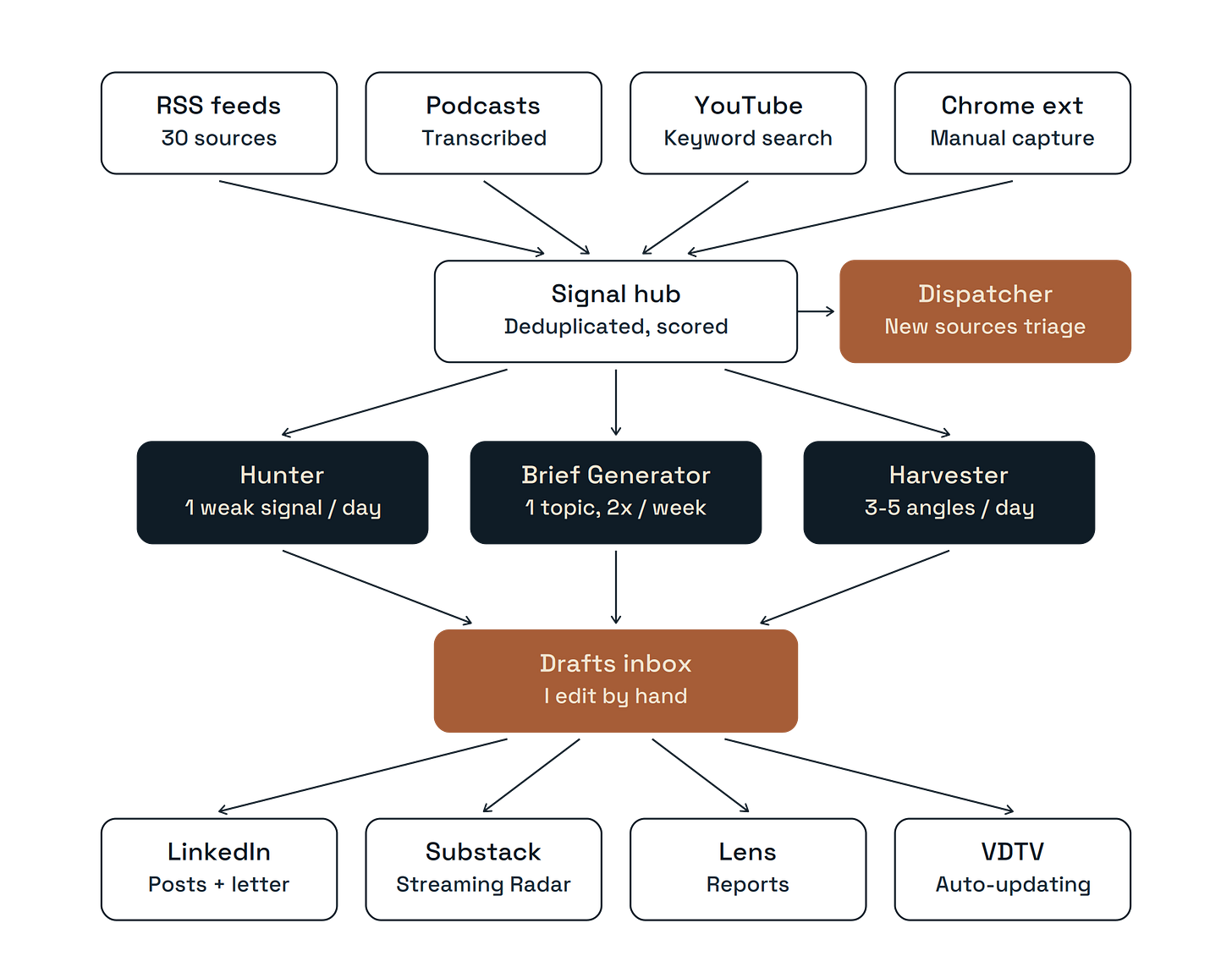

So I started building. In this order.

First an MCP server, as a joke. Then I started using it to write reports and the joke became serious. The MCP let me throw articles in via a Chrome extension, ask questions of the corpus from Claude Desktop, pull anything I needed back out. Filing cabinet plus research assistant. The bottleneck was still me, feeding it one URL at a time. So I added a tool I called Signal: I give it keywords, it hunts in external search engines, brings back sources I haven’t seen yet. Some signals were gold. Some were pure noise. So I added a way to score them: drop the dud queries, double down on the good ones.
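For readers who want to picture the scoring step, here is a minimal sketch of one way it could work. It is not the code behind Signal. The `QueryStats` class, the `triage` function, and every threshold are assumptions made up for illustration: each keyword query tracks how many of its results were marked gold or noise, and the hit rate decides whether the query is dropped, kept, or boosted.

```python
from dataclasses import dataclass


@dataclass
class QueryStats:
    """Hypothetical record of how one keyword query has performed so far."""
    keyword: str
    gold: int   # results marked as useful
    noise: int  # results marked as noise

    @property
    def hit_rate(self) -> float:
        total = self.gold + self.noise
        return self.gold / total if total else 0.0


def triage(queries, min_samples=5, drop_below=0.2, boost_above=0.6):
    """Return a decision per keyword: "drop", "keep", or "boost".

    All thresholds are illustrative assumptions, not values from the article.
    """
    decisions = {}
    for q in queries:
        if q.gold + q.noise < min_samples:
            # Too few rated results to judge the query yet.
            decisions[q.keyword] = "keep"
        elif q.hit_rate < drop_below:
            decisions[q.keyword] = "drop"
        elif q.hit_rate >= boost_above:
            decisions[q.keyword] = "boost"
        else:
            decisions[q.keyword] = "keep"
    return decisions


queries = [
    QueryStats("microdrama payments", gold=8, noise=2),
    QueryStats("streaming news", gold=1, noise=19),
    QueryStats("brand new query", gold=1, noise=0),
]
decisions = triage(queries)
```

The `min_samples` guard is the design choice worth keeping in any version: a query with one lucky or unlucky result should not be boosted or dropped on that alone.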

Once Signal worked, I had volume. So I built a Brief Generator. Twice a week it picks one topic, digs into it across 72 hours of accumulated signals, and sends me three drafts: a long LinkedIn post, a newsletter section, a vertical drama capsule if relevant.

Then YouTube and podcasts started feeding the pile, and the Brief (twice a week) couldn’t keep up. So I built a Harvester. Daily, broader. Three to five publishable angles in my inbox before 9am Paris time, including transcripts from videos I’d never watch otherwise.

But neither the Brief nor the Harvester was great at weak signals, because both work off “what’s hot in the last 72 hours.” Weak signals are by definition not hot.

I figured out the Hunter in voice mode with Claude. (That’s how I build most things now: I rant for 5 to 10 minutes, the model summarizes back, I read the summary, I see what holds up. This article was started the same way.) The Hunter wakes up at 6am UTC, reads the last 72 hours, finds me one thing. Just one. A weak signal mentioned by at least two different source types, that I haven’t covered yet. Memo at breakfast. Three buttons in the email: promote, watch, reject.
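The Hunter's rule (last 72 hours, at least two different source types, not already covered) can be sketched as a filter. This is an illustration under stated assumptions, not the real implementation: the `Mention` shape, the `weak_signal_candidates` function, and the source type labels are all hypothetical, and the sketch stops at listing candidates because the article does not say how the final single pick is made.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class Mention:
    """Hypothetical record: one source mentioning one topic."""
    topic: str
    source_type: str  # e.g. "article", "podcast", "youtube" (assumed labels)
    seen_at: datetime


def weak_signal_candidates(mentions, covered_topics, now,
                           window_hours=72, min_source_types=2):
    """Topics seen inside the window, across enough distinct source types,
    that have not been covered yet. Returned sorted by name."""
    cutoff = now - timedelta(hours=window_hours)
    types_by_topic = {}
    for m in mentions:
        if m.seen_at < cutoff or m.topic in covered_topics:
            continue  # too old, or already written about
        types_by_topic.setdefault(m.topic, set()).add(m.source_type)
    return sorted(
        topic for topic, types in types_by_topic.items()
        if len(types) >= min_source_types
    )


now = datetime(2025, 1, 10, 6, 0, tzinfo=timezone.utc)
mentions = [
    Mention("payment friction", "article", now - timedelta(hours=10)),
    Mention("payment friction", "podcast", now - timedelta(hours=30)),
    Mention("ad tiers", "article", now - timedelta(hours=5)),
    Mention("ad tiers", "article", now - timedelta(hours=6)),
    Mention("old topic", "article", now - timedelta(hours=100)),
    Mention("old topic", "youtube", now - timedelta(hours=1)),
    Mention("already covered", "article", now - timedelta(hours=2)),
    Mention("already covered", "podcast", now - timedelta(hours=3)),
]
candidates = weak_signal_candidates(mentions, {"already covered"}, now)
```

In this toy data only "payment friction" survives: "ad tiers" appears twice but in a single source type, "old topic" has just one mention inside the window, and the last topic is excluded as already covered.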

Now. Here’s the part most articles about AI workflows skip.

The agents disappoint me all the time. Not because they’re broken. Because they’re not me.

A year of running this, and here’s what I’ve actually figured out. There are two things AI does for me that I genuinely couldn’t do alone. One: volume sorting. Once I’ve shown an agent ten things I consider good and ten I consider noise, it gets very good at flagging the next thousand. That’s real. Two: it cracks open languages I don’t read. I’ve published on Chinese microdrama platforms (ReelShort, DramaBox, Crazy Maple), on Brazilian vertical apps, on payment friction in markets where I don’t speak the language. Four years ago, that was out of reach for me. It isn’t anymore. Live translation plus an agent that can read 200 sources in an afternoon changes what’s possible for one person.

But here’s what these agents have never done: have a new idea. Not once. They produce patchworks. Recombinations. Plausible-looking syntheses of things they’ve already seen. The actual epiphanies, the moments where a weak signal turns into “oh wait, this is actually about X”, happen when I’m sitting there reading what the Hunter brought back and arguing with it. Like I’d argue with a colleague. The agent surfaces something I’d never have found, I push back on its framing, it pushes back on mine, and somewhere in the conversation something tilts.

Maybe that only works for me. I’m not going to claim it generalizes. But it’s how the value actually shows up in my workflow, and it’s nothing like “AI writes the article and the human reviews it.” It’s much closer to: AI brings the raw material I couldn’t reach, then we talk.

Which is why the “AI will replace journalists” framing has always sounded off to me. The agents go faster than I do. They reach further than I do. But the moment I stop holding the wheel, they go off the road. Hard. Every time. That’s not a flaw I expect to be patched in the next model. It’s the shape of the tool.

So nothing publishes automatically. Everything lands as a draft. I edit by hand. Which is the part I actually like (I write fiction on the side; the writing is the part I look forward to, and the rest is just there to feed me good raw material).

Five publishing surfaces at the end of the chain: long posts on LinkedIn, a small narrative newsletter I run on LinkedIn, the main Streaming Radar newsletter on Substack (French analysis plus this English builder log), Lens, and verticaldrama.tv, the separate site that came out of all the vertical drama signals I was throwing away because they didn’t fit the newsletter cadence.

The whole thing runs on Cloudflare Workers, GitHub Actions, and the Anthropic API. About a hundred bucks a month in credits. Not finished either. Two big sources I can’t get to: Twitter, because the API is now a luxury good and my application got rejected. And LinkedIn, which is maybe 30 to 40 percent of where I find good stuff in my day-to-day, and which is functionally locked. The data export gives you everything except the posts. Which is the one thing you’d actually want. If anyone has cracked either, please tell me. I’ll buy you a beer.

I also don’t think the way I built this is the only way. I built it with Claude because Claude is the tool I know best and Claude Code lets me move fast. There are other stacks, other tradeoffs. I’d be curious to hear them.

What can I say. The job hasn’t really changed in 25 years. Only the radar keeps changing: forum-digging in 2000, Twitter lists on a second monitor in 2007, three agents arguing with me now. I wake up, read what they found, disagree with half of it, and write.